EchoGuide: Active Acoustic Guidance for LLM-Based Eating Event Analysis from Egocentric Videos

Published in The Proceedings of the International Symposium on Wearable Computers (ISWC), 2024

Recommended citation: Vineet Parikh, Saif Mahmud, Devansh Agarwal, Ke Li, François Guimbretière, and Cheng Zhang. 2024. EchoGuide: Active Acoustic Guidance for LLM-Based Eating Event Analysis from Egocentric Videos. In Proceedings of the 2024 ACM International Symposium on Wearable Computers (ISWC). Association for Computing Machinery, New York, NY, USA, 40–47. https://dl.acm.org/doi/10.1145/3675095.3676611

Best Paper Honorable Mention Award

October 5–9, 2024, Melbourne, Australia

Keywords: Eating Detection, Acoustic Sensing, Activity Recognition, Foundation Models

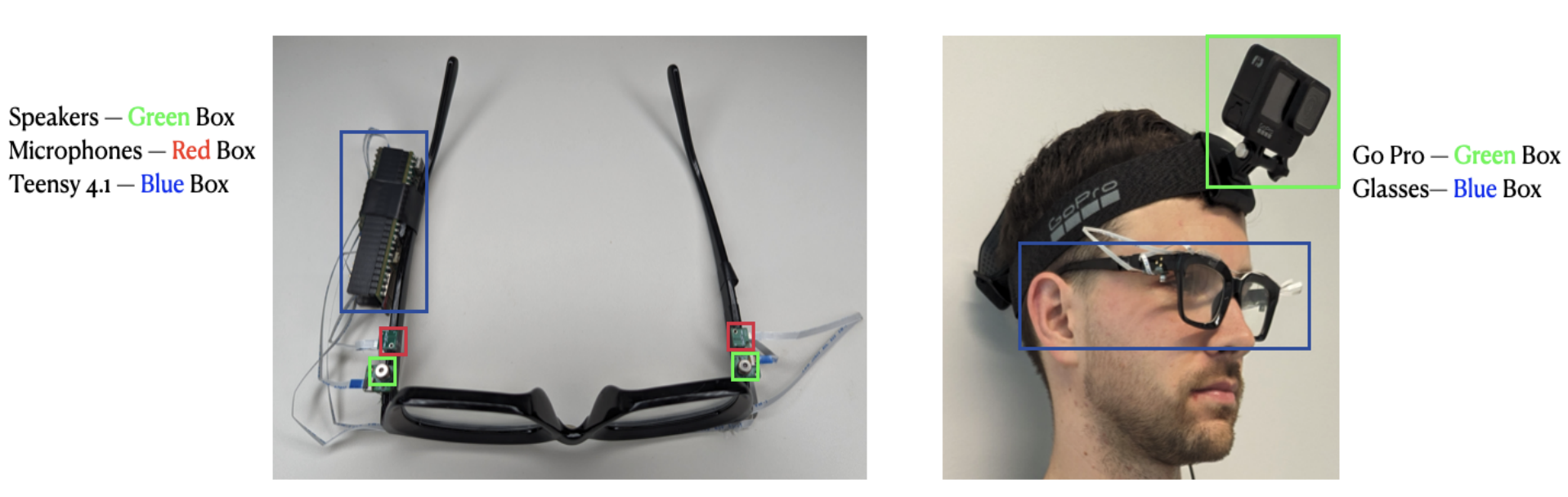

Self-recording eating behaviors, a practice recommended by many health professionals, is a step towards a healthy lifestyle. However, the current practice of manually recording eating activities using paper records or smartphone apps is often unsustainable and inaccurate. Smart glasses have emerged as a promising wearable form factor for tracking eating behaviors, but existing systems primarily identify when eating occurs without capturing details of the eating activities (e.g., what is being eaten). In this paper, we present EchoGuide, an application and system pipeline that leverages low-power active acoustic sensing to guide head-mounted cameras to capture egocentric videos, enabling efficient and detailed analysis of eating activities. By combining active acoustic sensing for eating detection with video captioning models and large-scale language models for retrieval augmentation, EchoGuide intelligently clips and analyzes videos to create concise, relevant records of eating activity. We evaluated EchoGuide with 9 participants in naturalistic settings involving eating activities, demonstrating high-quality summarization and significant reductions in the amount of video data needed, paving the way for practical, scalable eating activity tracking.
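The clipping idea in the abstract can be illustrated with a minimal sketch. It is not the paper's implementation: the per-window eating probabilities, the function names `eating_clips` and `retained_fraction`, and every parameter value (window length, threshold, padding, merge gap) are assumptions chosen for illustration. The sketch only shows how detections from an acoustic eating detector might be turned into video clip intervals, and how the resulting reduction in video data could be measured.

```python
from typing import List, Tuple

Clip = Tuple[float, float]  # (start_seconds, end_seconds)


def eating_clips(
    probs: List[float],
    window_s: float = 1.0,   # assumed length of one detection window
    threshold: float = 0.5,  # assumed decision threshold
    pad_s: float = 1.0,      # assumed context kept around each detection
    max_gap_s: float = 2.0,  # assumed gap below which clips are merged
) -> List[Clip]:
    """Turn per-window eating probabilities into padded, merged clip intervals."""
    total_s = len(probs) * window_s
    clips: List[Clip] = []
    for i, p in enumerate(probs):
        if p < threshold:
            continue  # window not classified as eating
        # Pad the detected window, clamped to the recording bounds.
        start = max(0.0, i * window_s - pad_s)
        end = min(total_s, (i + 1) * window_s + pad_s)
        if clips and start - clips[-1][1] <= max_gap_s:
            # Close enough to the previous clip: extend it instead.
            clips[-1] = (clips[-1][0], end)
        else:
            clips.append((start, end))
    return clips


def retained_fraction(clips: List[Clip], total_s: float) -> float:
    """Fraction of the full recording that the clips would keep."""
    if total_s <= 0:
        return 0.0
    return sum(end - start for start, end in clips) / total_s


if __name__ == "__main__":
    # Hypothetical detector output for a 10-second recording.
    probs = [0.1, 0.9, 0.8, 0.1, 0.1, 0.1, 0.1, 0.1, 0.1, 0.7]
    clips = eating_clips(probs)
    print(clips, retained_fraction(clips, len(probs) * 1.0))
```

In a pipeline of the kind the abstract describes, only the returned intervals would be passed on to video captioning and language-model analysis; how EchoGuide actually selects and merges segments is not specified here.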