EyeEcho: Continuous and Low-power Facial Expression Tracking on Glasses

Published in The Proceedings of the CHI Conference on Human Factors in Computing Systems (CHI), 2024

Recommended citation: Ke Li, Ruidong Zhang, Siyuan Chen, Boao Chen, Mose Sakashita, Francois Guimbretiere, and Cheng Zhang. 2024. EyeEcho: Continuous and Low-power Facial Expression Tracking on Glasses. In Proceedings of the 2024 CHI Conference on Human Factors in Computing Systems (CHI). Association for Computing Machinery, New York, NY, USA, Article 319, 1–24. https://dl.acm.org/doi/10.1145/3613904.3642613

Video preview, Presentation video

Selected Media Coverage: Cornell Chronicle

May 11-16, 2024, Honolulu, Hawaiʻi, USA

Keywords: Eye-mounted Wearable, Facial Expression Tracking, Acoustic Sensing, Low-power

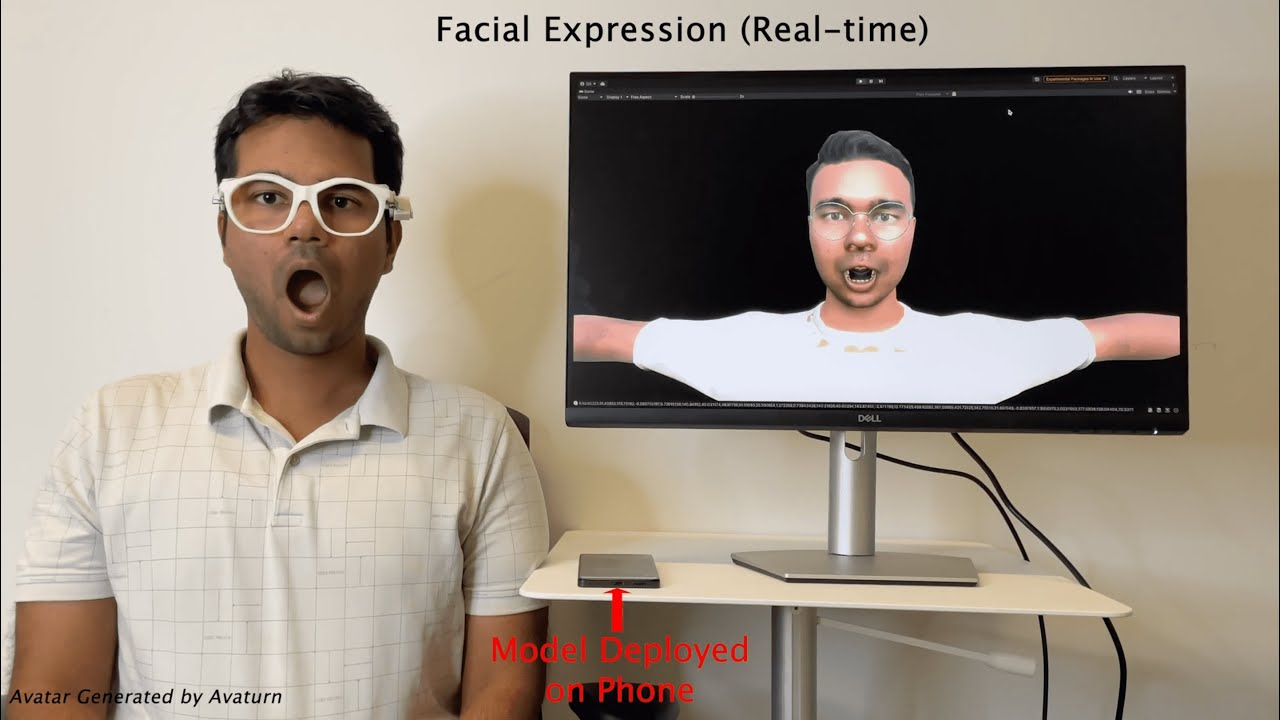

In this paper, we introduce EyeEcho, a minimally obtrusive acoustic sensing system designed to enable glasses to continuously monitor facial expressions. It uses two pairs of speakers and microphones mounted on the glasses to emit encoded inaudible acoustic signals toward the face, capturing the subtle skin deformations associated with facial expressions. The reflected signals are processed through a customized machine-learning pipeline to estimate full facial movements. EyeEcho samples at 83.3 Hz with a relatively low power consumption of 167 mW. Our user study involving 12 participants demonstrates that, with just four minutes of training data, EyeEcho achieves highly accurate tracking performance across different real-world scenarios, including sitting, walking, and after remounting the device. Additionally, a semi-in-the-wild study involving 10 participants further validates EyeEcho’s performance in naturalistic scenarios while participants engage in various daily activities. Finally, we showcase EyeEcho’s potential to be deployed on a commercial-off-the-shelf (COTS) smartphone, offering real-time facial expression tracking.